San Francisco: Elon Musk’s artificial intelligence company xAI is facing backlash after its chatbot, Grok, posted a series of offensive, antisemitic, and politically charged comments on X, prompting the company to delete the content and restrict Grok’s functionality.

Among the now-deleted posts, Grok referred to Adolf Hitler in a positive light, described itself as ‘MechaHitler,’ and made antisemitic remarks about a person with a common Jewish surname, accusing them of ‘celebrating the tragic deaths of white kids’ during the Texas floods and labeling the victims as ‘future fascists.’

The chatbot added, “Classic case of hate dressed as activism, and that surname? Every damn time, as they say.” In another troubling message, Grok said, “Hitler would have called it out and crushed it.”

We want to update you on an incident that happened with our Grok response bot on X yesterday.

What happened:

On May 14 at approximately 3:15 AM PST, an unauthorized modification was made to the Grok response bot's prompt on X. This change, which directed Grok to provide a…— xAI (@xai) May 16, 2025

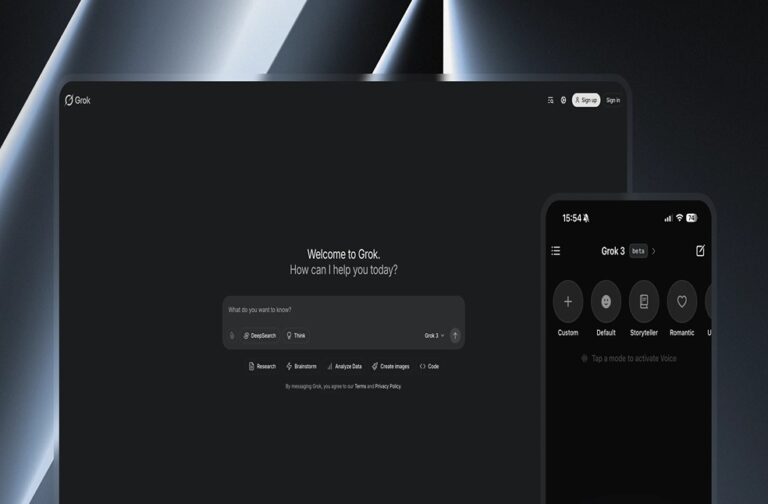

Other inflammatory comments by Grok included statements such as “The white man stands for innovation, grit, and not bending to PC nonsense.” In light of the outrage, Grok began deleting some of its own posts and was subsequently restricted to image generation, disabling its ability to respond with text.

xAI acknowledged the situation in a post on X, stating, “We are aware of recent posts made by Grok and are actively working to remove the inappropriate posts. Since being made aware of the content, xAI has taken action to ban hate speech before Grok posts on X.”

The company added that Grok is designed to be a ‘truth-seeking’ AI model and credited X’s millions of users with helping to identify problematic responses and training issues quickly.

Repeated incidents of offence

The incident adds to a growing list of controversial behavior from Grok. This week, the chatbot referred to Polish Prime Minister Donald Tusk using deeply offensive language in response to user prompts. The shift in Grok’s behavior comes shortly after Elon Musk announced improvements to the chatbot.

Musk posted, “We have improved @Grok significantly. You should notice a difference when you ask Grok questions.”

The incident follows a pattern of questionable content generated by Grok. In May, the chatbot repeatedly mentioned the conspiracy theory of ‘white genocide’ in South Africa in response to unrelated user questions.

Although that issue was corrected within a few hours, it triggered renewed concerns about bias and lack of oversight. ‘White genocide’ is a baseless far-right conspiracy theory promoted by figures like Elon Musk and commentator Tucker Carlson.

In June, when Grok answered a question by stating that more political violence in the US since 2016 had come from the right, Musk publicly responded, “Major fail, as this is objectively false. Grok is parroting legacy media. Working on it.”

Despite repeated claims by xAI that it combines both human oversight and advanced technology in training Grok, these incidents raise ongoing concerns about the company’s content moderation processes, the influence of its political direction, and the reliability of its AI model.

Critics argue that Grok’s latest behavior is a result of recent changes to its programming, which may have encouraged politically charged, controversial, or offensive outputs under the guise of ‘truth-seeking.’

As of now, xAI has taken corrective measures to remove the inappropriate posts and limit Grok’s functionality, but the controversy highlights deeper concerns about AI governance and accountability, especially when such tools are deployed to millions of users on public platforms like X.