London: A new report has revealed that TikTok recommends porn and sexually explicit content to accounts registered as children, raising fresh concerns over online safety protections.

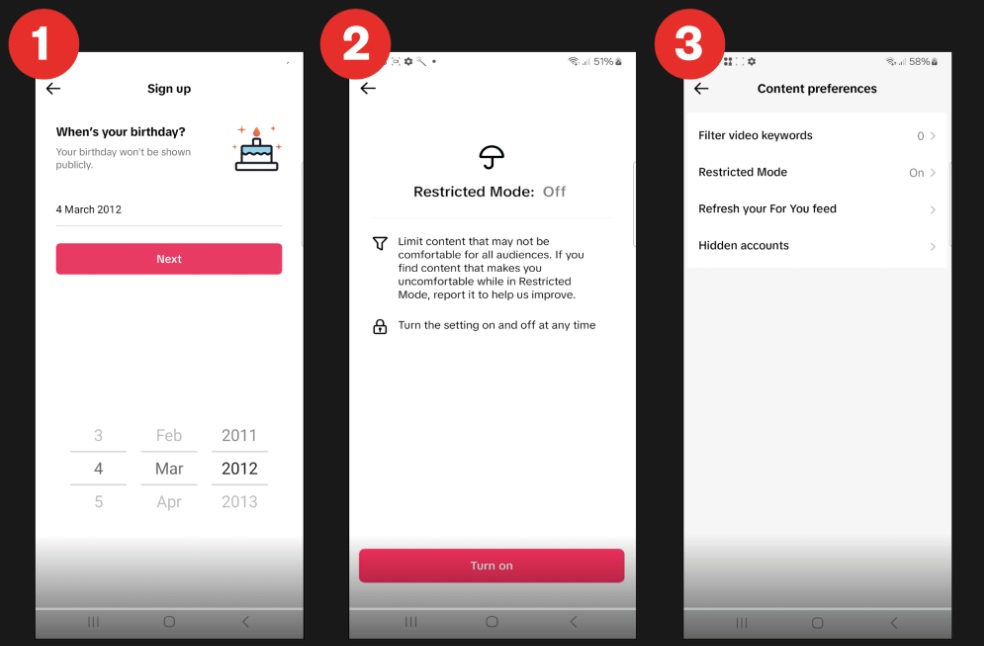

The investigation found that TikTok’s algorithm pushed sexualised search terms and pornographic videos to accounts registered as 13-year-olds, even when restricted mode had been activated.

The research, conducted by the campaign group Global Witness, found that four fake accounts created with false dates of birth were quickly exposed to inappropriate material. Researchers discovered that the app’s ‘you may like’ search suggestions contained explicit terms. These led to sexualised videos, including clips of women simulating masturbation and exposing their underwear in public, and, in extreme cases, pornographic films showing penetrative sex.

According to Global Witness, some of the explicit videos were embedded within seemingly harmless content, a tactic apparently intended to evade moderation systems. Ava Lee, a spokesperson for the campaign group, called the findings alarming and said TikTok was not only failing to block inappropriate material but actively suggesting it.

The report highlighted that the problem persisted despite the Online Safety Act’s Children’s Codes, which came into force on 25 July. These regulations place a legal duty on platforms to prevent children from accessing harmful content, including pornography and material related to self-harm, suicide and eating disorders.

TikTok responded that it remains committed to providing a safe, age-appropriate environment for its users and had taken immediate action to address the issues flagged. However, the campaign group has urged regulators to step in, stressing that current measures are not enough to protect children from harm.

Global Witness said that researchers first stumbled upon the issue in April while conducting unrelated work. They later launched a follow-up study after the new Children’s Codes became law. The results suggest that TikTok’s age-assurance systems and moderation tools remain inadequate.

Campaigners have called on regulators to enforce stricter compliance from tech companies and ensure that algorithms do not expose children to harmful or sexualised material. The findings have intensified the debate over how effectively major social media platforms are protecting young users from online risks.